SNNCutoff

I unified my PhD work into SNNCutoff, an open-source framework for efficient training and evaluation of spiking neural networks (SNNs). Training is enhanced through regularization techniques, while the evaluation module enables efficient assessment of SNN cutoffs, or adaptive timesteps.

Overview

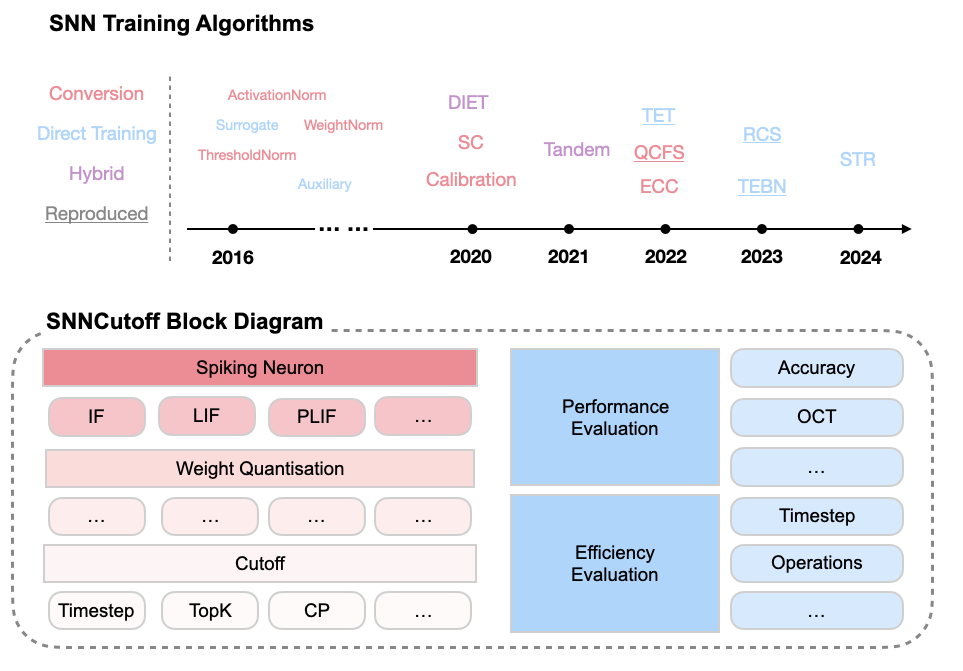

- SNN Training Algorithms:

- SNN Training Regularization:

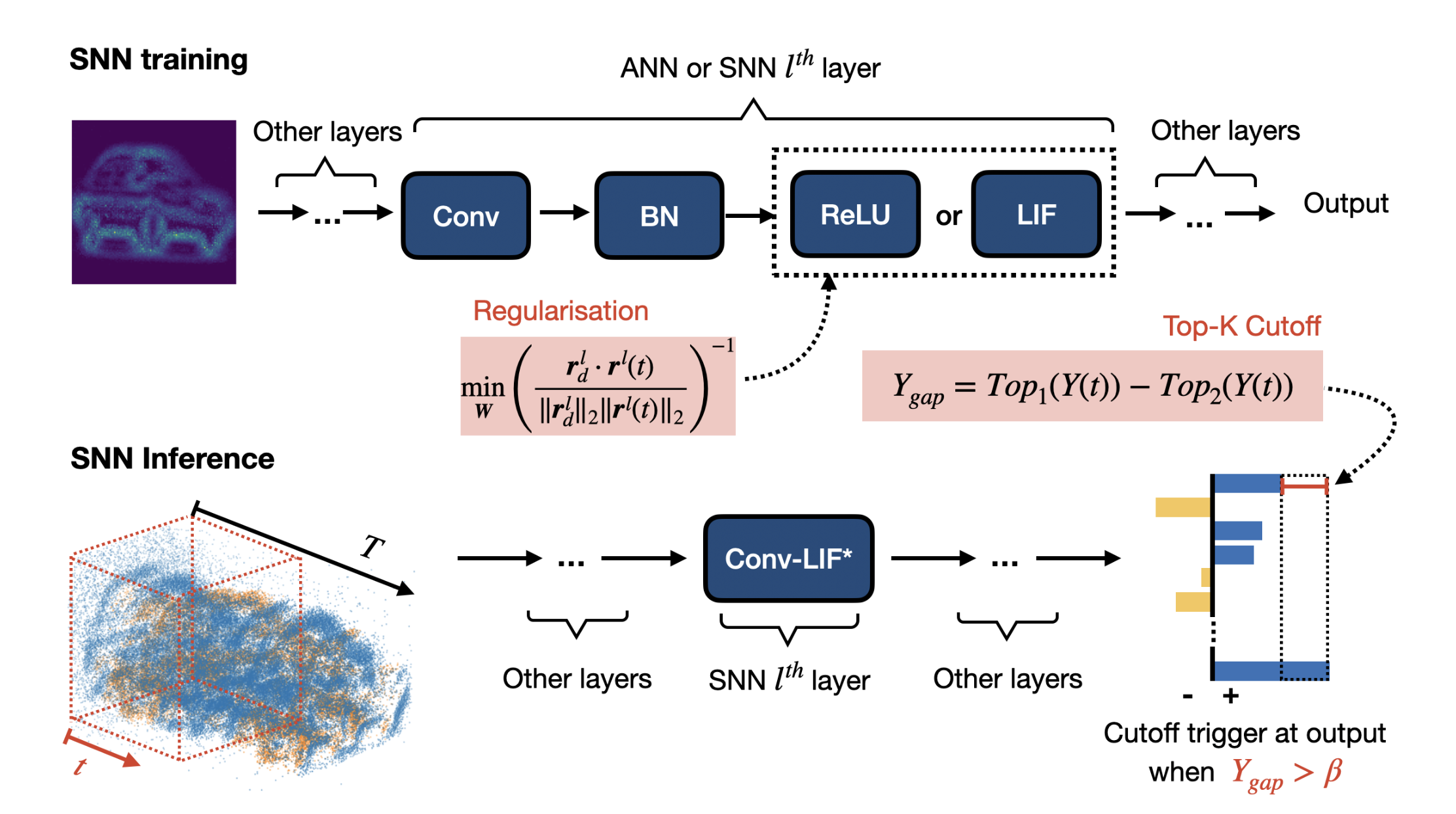

- Regularization of Cosine Similarity (Wu et al., 2025)

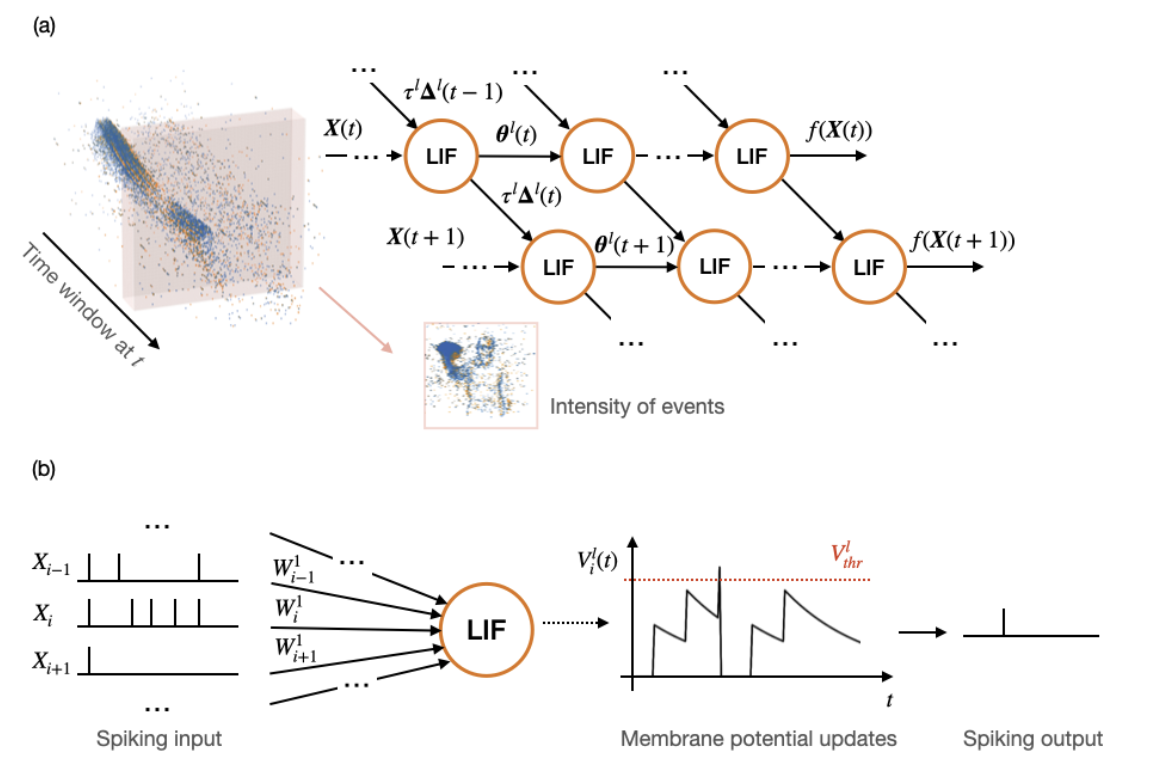

- Spatial Temporal Regularization (Wu et al., 2024)

- Cutoff Approximation:

- Timestep (Baseline): Cutoff triggered at a fixed timestep.

- Top-K: Cutoff triggered using the gap between the top-1 and top-2 output predictions at each timestep (Wu et al., 2025).

- Softmax-based: Optimal softmax-based cutoff after Spatial Temporal Regularization (STR) (Wu et al., 2024).
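The three cutoff rules above can be illustrated with a minimal, framework-independent sketch. This is not SNNCutoff's API: the function names (`topk_gap`, `softmax_confidence`, `run_with_cutoff`), the thresholds, and the toy output values are all hypothetical, and the softmax rule here is a plain confidence threshold rather than the optimal cutoff described in Wu et al. (2024). It assumes the network produces one output vector per timestep and that predictions are read from the running sum.

```python
import math


def topk_gap(outputs):
    """Gap between the largest and second-largest accumulated outputs."""
    top1, top2 = sorted(outputs, reverse=True)[:2]
    return top1 - top2


def softmax_confidence(outputs):
    """Largest softmax probability of the accumulated outputs."""
    peak = max(outputs)
    exps = [math.exp(o - peak) for o in outputs]  # shift for numerical stability
    return max(exps) / sum(exps)


def run_with_cutoff(per_step_outputs, trigger):
    """Accumulate per-timestep outputs; stop as soon as trigger(accumulated, t) is true.

    Returns (predicted_class, timesteps_used). If the trigger never fires,
    all timesteps are used.
    """
    if not per_step_outputs:
        raise ValueError("need at least one timestep of outputs")
    accumulated = [0.0] * len(per_step_outputs[0])
    used = 0
    for t, step in enumerate(per_step_outputs, start=1):
        accumulated = [a + s for a, s in zip(accumulated, step)]
        used = t
        if trigger(accumulated, t):
            break
    prediction = max(range(len(accumulated)), key=accumulated.__getitem__)
    return prediction, used


# Hypothetical output spikes for 3 classes over 4 timesteps.
outputs = [[1, 0, 0], [1, 1, 0], [1, 0, 0], [1, 0, 1]]

# Timestep (baseline): always stop at a fixed timestep (here 2).
fixed = run_with_cutoff(outputs, lambda acc, t: t >= 2)

# Top-K: stop once the top-1/top-2 gap reaches a threshold (here 2.0).
gap = run_with_cutoff(outputs, lambda acc, t: topk_gap(acc) >= 2.0)

# Softmax-based: stop once the softmax confidence reaches a threshold (here 0.8).
soft = run_with_cutoff(outputs, lambda acc, t: softmax_confidence(acc) >= 0.8)
```

With these toy values, the baseline stops at timestep 2 regardless of the input, while the two adaptive rules wait until timestep 3, when the leading class has pulled far enough ahead. In practice the thresholds would be tuned on held-out data to trade accuracy against latency.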

Tutorials

SNNCutoff provides training and evaluation examples in scripts. More details are available in the Documentation.

References

2025

- Wu et al. Frontiers in Neuroscience, 2025

2024

- Wu et al. arXiv preprint arXiv:2405.00699, 2024